Memory isn’t cheap! Despite the falling costs and increasing sizes of DRAM DIMMs, it’s still damned expensive compared to most non-volatile media on a price-per-GB basis. What’s more frustrating is that you often buy all of this expensive RAM, assign it to your applications, and find later through detailed monitoring that only a relatively small percentage of it is actually in active use.

For many years, we have had technologies such as paging, which allow you to maximise the use of your physical RAM by writing the least-used pages out to disk, freeing up RAM for services with current memory demand. The problem with paging is that it can be unreliable, and when you do actually need a page back, reading it from disk can be several orders of magnitude slower than reading it from RAM.

Worse still, if you are running a workload such as virtual machines and the underlying host becomes memory constrained, the hypervisor often doesn’t have sufficient visibility of how that memory is actually being used, and as such will simply swap out arbitrary memory pages to a swap file. This can obviously have a significant impact on virtual machine performance.

More and more applications are being built to run in memory these days, from Redis to Varnish, Hortonworks to MongoDB. Even Microsoft jumped on the bandwagon with In-Memory OLTP in SQL Server 2014.

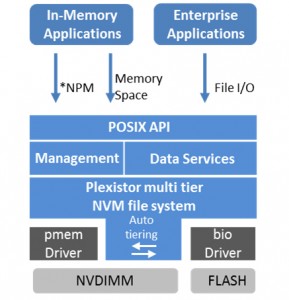

One of the companies we saw at Storage Field Day 9, Plexistor, told us that they can offer both tiered POSIX storage and tiered non-volatile memory through a single software stack.

The POSIX option could be thought of as a non-volatile, tiered RAM disk. Pretty cool, but not massively unique, as RAM disks have been around for years.

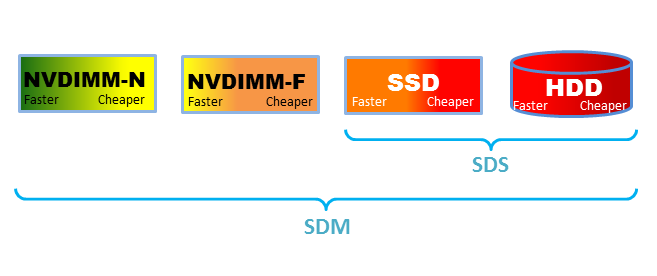

The element which really interested me was the latter option: effectively a tiered memory driver which presents RAM to the OS, but in reality tiers it between NVDIMMs, SSDs and HDDs depending on how hot or cold the pages are! They will also be able to take advantage of newer bit-addressable technologies such as 3D XPoint as they come to market, making it even more awesome!
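To make the hot/cold idea a little more concrete, here is a toy sketch of generic page tiering: count accesses per page, then periodically rank the pages and place the hottest ones on the fastest tier. This is not Plexistor’s implementation or API. The `TieredMemory` class, the tier names and the per-tier capacities (measured in pages) are all hypothetical, and a real memory driver would track page temperature using hardware access bits rather than an explicit counter.

```python
from collections import Counter

# Hypothetical tiers, fastest first, with illustrative capacities in pages.
# These are assumptions for the sketch, not Plexistor's actual configuration.
TIERS = [("nvdimm", 2), ("ssd", 2), ("hdd", 10)]


class TieredMemory:
    """Toy model: keep the most frequently accessed pages on the fastest tier."""

    def __init__(self, tiers):
        self.tiers = tiers
        self.hits = Counter()   # page id -> number of recorded accesses
        self.placement = {}     # page id -> tier name

    def access(self, page):
        # Record one access to a page (a stand-in for hardware access tracking).
        self.hits[page] += 1

    def rebalance(self):
        # Rank pages hottest-first; ties keep the order pages were first seen.
        ranked = [page for page, _ in self.hits.most_common()]
        if len(ranked) > sum(capacity for _, capacity in self.tiers):
            raise MemoryError("more pages than total tier capacity")
        self.placement.clear()
        start = 0
        # Fill each tier up to its capacity, fastest tier first.
        for name, capacity in self.tiers:
            for page in ranked[start:start + capacity]:
                self.placement[page] = name
            start += capacity
        return self.placement


if __name__ == "__main__":
    mem = TieredMemory(TIERS)
    for page, count in [("a", 50), ("b", 40), ("c", 5), ("d", 3), ("e", 1)]:
        for _ in range(count):
            mem.access(page)
    print(mem.rebalance())
    # {'a': 'nvdimm', 'b': 'nvdimm', 'c': 'ssd', 'd': 'ssd', 'e': 'hdd'}
```

The frequency-ranking policy here is just one simple choice; a production system would more likely weight recent accesses more heavily and migrate pages incrementally rather than recomputing the whole layout.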

All of this is done simply by adding their NVM file system (i.e. a device driver) on top of the pmem and bio drivers, and it is compatible with most Linux distributions running reasonably up-to-date kernels.

It’s primarily designed to work with some of the Linux-based memory-intensive apps mentioned above, but it will also work with more traditional workloads, such as MySQL and the KVM hypervisor.

Plexistor define their product as “Software Defined Memory” aka SDM. An interesting term which is jumping on the SDX bandwagon, but I kind of get where they’re going with it…

One thing to note with Plexistor is that they actually have two flavours of this product: one which uses NVRAM to provide a persistent store, and one which is non-persistent but can be run on cloud infrastructure such as AWS. If you need data persistence with the latter, you will have to handle it at the application layer, or risk losing data.

If you want to find out a bit more about them, you can find their Storage Field Day presentation here:

Plexistor Presents at Storage Field Day 9

Musings…

As a standalone product, I have a sneaking suspicion that Plexistor may not have the longevity and scope it might gain if it were acquired by a large vendor and integrated into existing products. Sharon Azulai has already sold one startup at a relatively early stage (Tonian, which was sold to Primary Data), so I suspect he would not be averse to the idea.

Although the code has been written specifically for the Linux kernel, they have already indicated that it would be possible to develop the same driver for VMware! As such, I think it would be a really interesting idea for VMware to consider acquiring them and integrating the technology into ESXi. It’s widely accepted that on most vSphere solutions you run out of memory before CPU. Moreover, when looking in the vSphere console, we often see that although a significant amount of memory is allocated to VMs, only a small proportion of it is actually active.

Using Plexistor technology with vSphere would enable VMware both to provide a far larger pool of RAM per host for customers and to significantly improve upon the current vswp swapping process, by ensuring hot memory blocks always stay in RAM and cold blocks are tiered out to flash.

The homelab nerd in me also imagines an Intel NUC with 160GB+ of addressable RAM per node! 🙂

Of course, the current licensing models for retail customers favour the “run out of RAM first” approach, as it sells more per-CPU licenses. However, I think that in the long term VMware will likely move to a subscription-based model, probably similar to the one used by service providers (i.e. based on RAM). If this ends up being the approach, VMware could offer a product which saves their customers further hardware costs whilst maintaining their ESXi revenues. Win-Win!

Further Reading

One of the other SFD9 delegates had their own take on the presentation we saw. Check it out here:

Disclaimer/Disclosure: My flights, accommodation, meals, etc. at Storage Field Day 9 were provided by Tech Field Day, but there was no expectation or request for me to write about any of the vendors’ products or services, and I was not compensated in any way for my time at the event.
